If You Calculate an F Statistic and Find That It Is Negative


Chapter 6. F-Test and One-Way ANOVA

F-distribution

Years ago, statisticians discovered that when pairs of samples are taken from a normal population, the ratios of the variances of the samples in each pair will always follow the same distribution. Not surprisingly, over the intervening years, statisticians have found that the ratios of sample variances collected in a number of different ways follow this same distribution, the F-distribution. Because we know that sampling distributions of the ratio of variances follow a known distribution, we can conduct hypothesis tests using the ratio of variances.

The F-statistic is just:

[latex]F = s^2_1 / s^2_2[/latex]

where [latex]s^2_1[/latex] is the variance of sample 1. Remember that the sample variance is:

[latex]s^2 = \sum(x - \overline{x})^2 / (n-1)[/latex]

Think about the shape that the F-distribution will have. If [latex]s^2_1[/latex] and [latex]s^2_2[/latex] come from samples from the same population, then if many pairs of samples were taken and F-scores computed, most of those F-scores would be close to one. All of the F-scores will be positive since variances are always positive: the numerator in the variance formula is a sum of squares, so it will be positive, and the denominator is the sample size minus one, which will also be positive. Thinking about ratios requires some care. If [latex]s^2_1[/latex] is a lot larger than [latex]s^2_2[/latex], F can be quite large. It is equally possible for [latex]s^2_2[/latex] to be a lot larger than [latex]s^2_1[/latex], and then F would be very close to zero. Since F goes from zero to very large, with most of the values around one, it is obviously not symmetric; there is a long tail to the right, and a steep descent to zero on the left.
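The shape described above can be checked with a small simulation. The sketch below is illustrative only: the sample sizes (10 and 10), the number of trials (5,000), the standard normal population, and the helper name `simulate_f_scores` are assumptions chosen for the demonstration, not values from the text.

```python
import random
import statistics

random.seed(42)  # fixed seed so the run is repeatable


def simulate_f_scores(n1, n2, trials, mu=0.0, sigma=1.0):
    """Draw `trials` pairs of samples from one normal population
    and return the ratio of sample variances for each pair."""
    scores = []
    for _ in range(trials):
        sample1 = [random.gauss(mu, sigma) for _ in range(n1)]
        sample2 = [random.gauss(mu, sigma) for _ in range(n2)]
        # statistics.variance divides by n - 1, matching the formula for s^2
        scores.append(statistics.variance(sample1) / statistics.variance(sample2))
    return scores


# Assumed settings for illustration: two samples of 10, repeated 5000 times
f_scores = simulate_f_scores(n1=10, n2=10, trials=5000)

print("smallest F:", min(f_scores))
print("median F:  ", statistics.median(f_scores))
print("mean F:    ", statistics.mean(f_scores))
print("largest F: ", max(f_scores))
```

In a run like this, every simulated F-score should be positive, the median should sit near one, and the mean should exceed the median, which is what a long right tail implies.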

There are two uses of the F-distribution that will be discussed in this chapter. The first is a very simple test to see if two samples come from populations with the same variance. The second is one-way analysis of variance (ANOVA), which uses the F-distribution to test to see if three or more samples come from populations with the same mean.

A simple test: Do these two samples come from populations with the same variance?

Because the F-distribution is generated by drawing two samples from the same normal population, it can be used to test the hypothesis that two samples come from populations with the same variance. You would have two samples (one of size [latex]n_1[/latex] and one of size [latex]n_2[/latex]) and the sample variance from each. Obviously, if the two variances are very close to being equal, the two samples could easily have come from populations with equal variances. Because the F-statistic is the ratio of two sample variances, when the two sample variances are close to equal, the F-score is close to one. If you compute the F-score, and it is close to one, you accept your hypothesis that the samples come from populations with the same variance.

This is the basic method of the F-test. Hypothesize that the samples come from populations with the same variance. Compute the F-score by finding the ratio of the sample variances. If the F-score is close to one, conclude that your hypothesis is correct and that the samples do come from populations with equal variances. If the F-score is far from one, then conclude that the populations probably have different variances.

The basic method must be fleshed out with some details if you are going to use this test at work. There are two sets of details: first, formally writing hypotheses, and second, using the F-distribution tables so that you can tell if your F-score is close to one or not. Formally, two hypotheses are needed for completeness. The first is the null hypothesis that there is no difference (hence null). It is usually denoted as Ho. The second is that there is a difference, and it is called the alternative, and is denoted H1 or Ha.

Using the F-tables to decide how close to one is close enough to accept the null hypothesis (truly formal statisticians would say "fail to reject the null") is fairly tricky because the F-distribution tables are fairly tricky. Before using the tables, the researcher must decide how much chance he or she is willing to take that the null will be rejected when it is really true. The usual choice is 5 per cent, or as statisticians say, "α = .05". If more or less chance is wanted, α can be varied. Choose your α and go to the F-tables. First notice that there are a number of F-tables, one for each of several different levels of α (or at least a table for each two α's with the F-values for one α in bold type and the values for the other in regular type).

There are rows and columns on each F-table, and both are for degrees of freedom. Because two separate samples are taken to compute an F-score and the samples do not have to be the same size, there are two separate degrees of freedom, one for each sample. For each sample, the number of degrees of freedom is n-1, one less than the sample size. Going to the table, how do you decide which sample's degrees of freedom (df) are for the row and which are for the column? While you could put either one in either place, you can save yourself a step if you put the sample with the larger variance (not necessarily the larger sample) in the numerator, and then that sample's df determines the column and the other sample's df determines the row. The reason that this saves you a step is that the tables only show the values of F that leave α in the right tail where F > 1, as the picture at the top of most F-tables shows. Finding the critical F-value for left tails requires another step, which is outlined in the interactive Excel template in Figure 6.1. Simply change the numerator and the denominator degrees of freedom, and the α in the right tail of the F-distribution, in the yellow cells.

Figure 6.1 Interactive Excel Template of an F-Table – see Appendix 6.

F-tables are almost always printed as one-tail tables, showing the critical F-value that separates the right tail from the rest of the distribution. In most statistical applications of the F-distribution, only the right tail is of interest, because most applications are testing to see if the variance from a certain source is greater than the variance from another source, so the researcher is interested in finding if the F-score is greater than one. In the test of equal variances, the researcher is interested in finding out if the F-score is close to one, so that either a large F-score or a small F-score would lead the researcher to conclude that the variances are not equal. Because the critical F-value that separates the left tail from the rest of the distribution is not printed, and is not simply the negative of the printed value, researchers often simply divide the larger sample variance by the smaller sample variance, and use the printed tables to see if the quotient is "larger than one", effectively rigging the test into a one-tail format. For purists, and occasional instances, the left-tail critical value can be computed fairly easily.

The left-tail critical value for x, y degrees of freedom (df) is simply the inverse of the right-tail (table) critical value for y, x df. Looking at an F-table, you would see that the F-value that leaves α = .05 in the right tail when there are 10, 20 df is F = 2.35. To find the F-value that leaves α = .05 in the left tail with 10, 20 df, look up F = 2.77 for α = .05 and 20, 10 df. Divide one by 2.77, finding .36. That means that 5 per cent of the F-distribution for 10, 20 df is below the critical value of .36, and 5 per cent is above the critical value of 2.35.
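The reciprocal rule can be checked numerically. The sketch below uses only the Python standard library: it evaluates the F cumulative distribution through the regularized incomplete beta function (a standard continued-fraction routine) and finds quantiles by bisection. The function names are hypothetical helpers written for this illustration; a statistics package would normally supply these quantiles directly.

```python
from math import exp, lgamma, log


def _beta_continued_fraction(a, b, x, max_iter=300, eps=1e-14):
    """Continued fraction for the incomplete beta function (modified Lentz method)."""
    tiny = 1e-300
    qab, qap, qam = a + b, a + 1.0, a - 1.0
    c = 1.0
    d = 1.0 - qab * x / qap
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # even step of the recurrence
        aa = m * (b - m) * x / ((qam + m2) * (a + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        h *= d * c
        # odd step of the recurrence
        aa = -(a + m) * (qab + m) * x / ((a + m2) * (qap + m2))
        d = 1.0 + aa * d
        d = 1.0 / (d if abs(d) > tiny else tiny)
        c = 1.0 + aa / c
        c = c if abs(c) > tiny else tiny
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < eps:
            break
    return h


def regularized_incomplete_beta(a, b, x):
    """I_x(a, b), the regularized incomplete beta function."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = exp(lgamma(a + b) - lgamma(a) - lgamma(b) + a * log(x) + b * log(1.0 - x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _beta_continued_fraction(a, b, x) / a
    return 1.0 - front * _beta_continued_fraction(b, a, 1.0 - x) / b


def f_cdf(f, df_num, df_den):
    """P(F <= f) for an F-distribution with df_num, df_den degrees of freedom."""
    if f <= 0.0:
        return 0.0
    x = df_num * f / (df_num * f + df_den)
    return regularized_incomplete_beta(df_num / 2.0, df_den / 2.0, x)


def f_quantile(p, df_num, df_den):
    """Value of F with probability p below it, found by bisection."""
    lo, hi = 0.0, 1.0
    while f_cdf(hi, df_num, df_den) < p:
        hi *= 2.0
    for _ in range(200):
        mid = (lo + hi) / 2.0
        if f_cdf(mid, df_num, df_den) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


alpha = 0.05
right_tail_10_20 = f_quantile(1 - alpha, 10, 20)  # table value for 10, 20 df
right_tail_20_10 = f_quantile(1 - alpha, 20, 10)  # table value for 20, 10 df
left_tail_10_20 = 1.0 / right_tail_20_10          # reciprocal rule from the text
direct_left_10_20 = f_quantile(alpha, 10, 20)     # left tail computed directly

print(round(right_tail_10_20, 2), round(right_tail_20_10, 2))
print(round(left_tail_10_20, 2), round(direct_left_10_20, 2))
```

If the rule holds, the reciprocal of the 20, 10 df table value and the directly computed left-tail value for 10, 20 df should agree, and both should round to the .36 quoted in the text.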

Putting all of this together, here is how to conduct the test to see if two samples come from populations with the same variance. First, collect two samples and compute the sample variance of each, [latex]s^2_1[/latex] and [latex]s^2_2[/latex]. Second, write your hypotheses and choose α. Third, find the F-score from your samples, dividing the larger [latex]s^2[/latex] by the smaller so that F > 1. Fourth, go to the tables, find the table for α/2, and find the critical (table) F-score for the proper degrees of freedom (n-1 for the numerator sample and n-1 for the denominator sample). Compare it to the samples' F-score. If the samples' F is larger than the critical F, the samples' F is not "close to one", and Ha, that the population variances are not equal, is the best hypothesis. If the samples' F is less than the critical F, Ho, that the population variances are equal, should be accepted.

Example #1

Lin Xiang, a young banker, has moved from Saskatoon, Saskatchewan, to Winnipeg, Manitoba, where she has recently been promoted and made the manager of City Bank, a newly established bank in Winnipeg with branches across the Prairies. After a few weeks, she has discovered that maintaining the correct number of tellers seems to be more difficult than it was when she was a branch assistant manager in Saskatoon. Some days, the lines are very long, but on other days, the tellers seem to have little to do. She wonders if the number of customers at her new branch is simply more variable than the number of customers at the branch where she used to work. Because tellers work for a whole day or half a day (morning or afternoon), she collects the following data on the number of transactions in a half day from her branch and the branch where she used to work:

Winnipeg branch: 156, 278, 134, 202, 236, 198, 187, 199, 143, 165, 223

Saskatoon branch: 345, 332, 309, 367, 388, 312, 355, 363, 381

She hypothesizes:

[latex]H_o: \sigma^2_W = \sigma^2_S[/latex]

[latex]H_a: \sigma^2_W \neq \sigma^2_S[/latex]

She decides to use α = .05. She computes the sample variances and finds:

[latex]s^2_W = 1828.56[/latex]

[latex]s^2_S = 795.19[/latex]

Following the rule to put the larger variance in the numerator, so that she saves a step, she finds:

[latex]F = s^2_W/s^2_S = 1828.56/795.19 = 2.30[/latex]

Figure 6.2 Interactive Excel Template for F-Test – see Appendix 6.

Using the interactive Excel template in Figure 6.2 (and remembering to use the α = .025 table because the table is one-tail and the test is two-tail), she finds that the critical F for 10, 8 df is 4.30. Because her calculated F-score from Figure 6.2 is less than the critical score, she concludes that her F-score is "close to one", and that the variance of customers in her office is the same as it was in the old office. She will need to look further to solve her staffing problem.
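The arithmetic in Example #1 can be reproduced in a few lines. This is a minimal sketch using Python's standard `statistics` module; the variable names are assumptions made for illustration, and the critical value 4.30 is simply copied from the text rather than computed.

```python
import statistics

# Half-day transaction counts from Example #1
winnipeg = [156, 278, 134, 202, 236, 198, 187, 199, 143, 165, 223]
saskatoon = [345, 332, 309, 367, 388, 312, 355, 363, 381]

# Sample variances (statistics.variance divides by n - 1)
var_w = statistics.variance(winnipeg)
var_s = statistics.variance(saskatoon)

# Put the larger sample variance in the numerator so that F > 1
if var_w >= var_s:
    f_score = var_w / var_s
    df_num, df_den = len(winnipeg) - 1, len(saskatoon) - 1
else:
    f_score = var_s / var_w
    df_num, df_den = len(saskatoon) - 1, len(winnipeg) - 1

# Critical value quoted in the text for the alpha/2 = .025 table and 10, 8 df
CRITICAL_F = 4.30
reject_null = f_score > CRITICAL_F

print(f"s2_W = {var_w:.2f}, s2_S = {var_s:.2f}")
print(f"F = {f_score:.2f} with {df_num}, {df_den} df")
print("reject Ho" if reject_null else "do not reject Ho")
```

Because the calculated F falls below the critical value, the sketch reaches the same decision as the text: the data do not show a difference in variance between the two branches.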

Analysis of variance (ANOVA)

The importance of ANOVA

A more important use of the F-distribution is in analyzing variance to see if three or more samples come from populations with equal means. This is an important statistical test, not so much because it is frequently used, but because it is a bridge between univariate statistics and multivariate statistics and because the strategy it uses is one that is used in many multivariate tests and procedures.

One-way ANOVA: Do these three (or more) samples all come from populations with the same mean?

This seems wrong: we will test a hypothesis about means by analyzing variance. It is not wrong, but rather a really clever insight that some statistician had years ago. This idea, looking at variance to find out about differences in means, is the basis for much of the multivariate statistics used by researchers today. The ideas behind ANOVA are used when we look for relationships between two or more variables, the big reason we use multivariate statistics.

Testing to see if three or more samples come from populations with the same mean can often be a sort of multivariate exercise. If the three samples came from three different factories or were subject to different treatments, we are effectively seeing if there is a difference in the results because of different factories or treatments: is there a relationship between factory (or treatment) and the outcome?

Think about three samples. A group of x's have been collected, and for some good reason (other than their x value) they can be divided into three groups. You have some x's from group (sample) 1, some from group (sample) 2, and some from group (sample) 3. If the samples were combined, you could compute a grand mean and a total variance around that grand mean. You could also find the mean and (sample) variance within each of the groups. Finally, you could take the three sample means, and find the variance between them. ANOVA is based on analyzing where the total variance comes from. If you picked one x, the source of its variance, its distance from the grand mean, would have two parts: (1) how far it is from the mean of its sample, and (2) how far its sample's mean is from the grand mean. If the three samples really do come from populations with different means, then for most of the x's, the distance between the sample mean and the grand mean will probably be greater than the distance between the x and its group mean. When these distances are gathered together and turned into variances, you can see that if the population means are different, the variance between the sample means is likely to be greater than the variance within the samples.
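The two-part split of each observation's distance from the grand mean can be seen in a small calculation. The data below are hypothetical numbers invented purely for illustration (they do not come from the text), and the variable names are assumptions. The sketch sums the squared distances three ways: from the grand mean, from each observation's own sample mean, and from each sample mean to the grand mean.

```python
# Hypothetical illustrative data (not from the text): three small groups
groups = {
    "group 1": [4.0, 5.0, 6.0],
    "group 2": [7.0, 8.0, 9.0],
    "group 3": [13.0, 14.0, 15.0],
}

all_x = [x for xs in groups.values() for x in xs]
grand_mean = sum(all_x) / len(all_x)

# Total: squared distance of every x from the grand mean
ss_total = sum((x - grand_mean) ** 2 for x in all_x)

ss_within = 0.0   # part (1): each x to the mean of its own sample
ss_between = 0.0  # part (2): each x's sample mean to the grand mean
for xs in groups.values():
    group_mean = sum(xs) / len(xs)
    for x in xs:
        ss_within += (x - group_mean) ** 2
        ss_between += (group_mean - grand_mean) ** 2

print("total:  ", ss_total)
print("within: ", ss_within)
print("between:", ss_between)
print("within + between:", ss_within + ss_between)
```

The within and between sums add up to the total, and because these made-up groups were chosen to have well-separated means, the between part is much larger than the within part.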

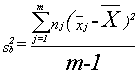

By this point in the book, it should not surprise you to learn that statisticians have found that if three or more samples are taken from a normal population, and the variance between the samples is divided by the variance within the samples, a sampling distribution formed by doing that over and over will have a known shape. In this case, it will be distributed like F with m-1, n-m df, where m is the number of samples and n is the size of the m samples altogether. Variance between is found by:

[latex]s^2_{between} = \sum_{j=1}^{m} n_j(\overline{x}_j - \overline{x})^2 / (m-1)[/latex]

where [latex]\overline{x}_j[/latex] is the mean of sample j, [latex]n_j[/latex] is the size of sample j, and [latex]\overline{x}[/latex] is the grand mean.

The numerator of the variance between is the sum of the squares of the distance between each x's sample mean and the grand mean. It is simply a summing of one of those sources of variance across all of the observations.

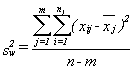

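The variance within is found by:

[latex]s^2_{within} = \sum_{j=1}^{m}\sum_{i=1}^{n_j}(x_{ij} - \overline{x}_j)^2 / (n-m)[/latex]

where [latex]x_{ij}[/latex] is the i-th observation in sample j and [latex]n_j[/latex] is the size of sample j.

Double sums need to be handled with care. First (operating on the inside or second sum sign) find the mean of each sample and the sum of the squares of the distances of each x in the sample from its mean. Second (operating on the outside sum sign), add together the results from each of the samples.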

The strategy for conducting a one-way analysis of variance is simple. Gather m samples. Compute the variance between the samples, the variance within the samples, and the ratio of between to within, yielding the F-score. If the F-score is less than one, or not much greater than one, the variance between the samples is no greater than the variance within the samples and the samples probably come from populations with the same mean. If the F-score is much greater than one, the variance between is probably the source of most of the variance in the total sample, and the samples probably come from populations with different means.

The details of conducting a one-way ANOVA fall into three categories: (1) writing hypotheses, (2) keeping the calculations organized, and (3) using the F-tables. The null hypothesis is that all of the population means are equal, and the alternative is that not all of the means are equal. Quite often, though two hypotheses are really needed for completeness, only Ho is written:

[latex]H_o: \mu_1=\mu_2=\ldots=\mu_m[/latex]

Keeping the calculations organized is important when you are finding the variance within. Remember that the variance within is found by squaring, and then summing, the distance between each observation and the mean of its sample. Though different people do the calculations differently, I find the best way to keep it all straight is to find the sample means, find the squared distances in each of the samples, and then add those together. It is also important to keep the calculations organized in the final computing of the F-score. If you remember that the goal is to see if the variance between is large, then it is easy to remember to divide variance between by variance within.

Using the F-tables is the third detail. Remember that F-tables are one-tail tables and that ANOVA is a one-tail test. Though the null hypothesis is that all of the means are equal, you are testing that hypothesis by seeing if the variance between is less than or equal to the variance within. The number of degrees of freedom is m-1, n-m, where m is the number of samples and n is the total size of all the samples together.

Example #2

The young bank manager in Example 1 is still struggling with finding the best way to staff her branch. She knows that she needs to have more tellers on Fridays than on other days, but she is trying to find if the need for tellers is constant across the rest of the week. She collects data for the number of transactions each day for two months. Here are her data:

Mondays: 276, 323, 298, 256, 277, 309, 312, 265, 311

Tuesdays: 243, 279, 301, 285, 274, 243, 228, 298, 255

Wednesdays: 288, 292, 310, 267, 243, 293, 255, 273

Thursdays: 254, 279, 241, 227, 278, 276, 256, 262

She tests the null hypothesis:

[latex]H_o: \mu_m=\mu_{tu}=\mu_w=\mu_{th}[/latex]

and decides to use α = .05. She finds:

[latex]\overline{x}_m = 291.8[/latex]

[latex]\overline{x}_{tu} = 267.3[/latex]

[latex]\overline{x}_w = 277.6[/latex]

[latex]\overline{x}_{th} = 259.1[/latex]

and the grand mean = 274.3

She computes variance within:

[latex]\left[(276-291.8)^2+(323-291.8)^2+\ldots+(243-267.3)^2+\ldots+(288-277.6)^2+\ldots+(254-259.1)^2\right]/(34-4) = 15887.6/30 = 529.6[/latex]

Then she computes variance between:

[latex]\left[9(291.8-274.3)^2+9(267.3-274.3)^2+8(277.6-274.3)^2+8(259.1-274.3)^2\right]/(4-1) = 5151.8/3 = 1717.3[/latex]

She computes her F-score:

[latex]F = 1717.3/529.6 = 3.24[/latex]

Figure 6.3 Interactive Excel Template for One-Way ANOVA – see Appendix 6.

You can enter the number of transactions each day in the yellow cells in Figure 6.3, and select the α. As you can then see in Figure 6.3, the calculated F-value is 3.24, while the F-table (F-Critical) value for α = .05 and 3, 30 df is 2.92. Because her F-score is larger than the critical F-value, or alternatively since the p-value (0.036) is less than α = .05, she concludes that the mean number of transactions is not equal on different days of the week, or at least there is one day that is different from others. She will want to adjust her staffing so that she has more tellers on some days than on others.
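The calculations in Example #2 can be organized along the lines suggested earlier: find the sample means, sum the squared distances within each sample, then sum the squared distances of the sample means from the grand mean. The sketch below is one way to do that in plain Python; the variable names are assumptions for illustration, and the critical value 2.92 is copied from the text rather than computed.

```python
# Daily transaction counts from Example #2
days = {
    "Mondays": [276, 323, 298, 256, 277, 309, 312, 265, 311],
    "Tuesdays": [243, 279, 301, 285, 274, 243, 228, 298, 255],
    "Wednesdays": [288, 292, 310, 267, 243, 293, 255, 273],
    "Thursdays": [254, 279, 241, 227, 278, 276, 256, 262],
}

m = len(days)                                # number of samples
n = sum(len(xs) for xs in days.values())     # total number of observations
grand_mean = sum(sum(xs) for xs in days.values()) / n

ss_within = 0.0
ss_between = 0.0
for xs in days.values():
    sample_mean = sum(xs) / len(xs)
    # inner sum: squared distance of each x from its own sample mean
    ss_within += sum((x - sample_mean) ** 2 for x in xs)
    # each observation contributes its sample mean's distance from the grand mean
    ss_between += len(xs) * (sample_mean - grand_mean) ** 2

var_within = ss_within / (n - m)
var_between = ss_between / (m - 1)
f_score = var_between / var_within

# Critical value quoted in the text for alpha = .05 and 3, 30 df
CRITICAL_F = 2.92
reject_null = f_score > CRITICAL_F

print(f"variance within  = {var_within:.1f}")
print(f"variance between = {var_between:.1f}")
print(f"F = {f_score:.2f} with {m - 1}, {n - m} df")
print("reject Ho" if reject_null else "do not reject Ho")
```

The results match the figures in the text to the precision shown there, and the F-score exceeds the quoted critical value, so the sketch also rejects the hypothesis of equal means.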

Summary

The F-distribution is the sampling distribution of the ratio of the variances of two samples drawn from a normal population. It is used directly to test to see if two samples come from populations with the same variance. Though you will occasionally see it used to test equality of variances, the more important use is in analysis of variance (ANOVA). ANOVA, at least in its simplest form as presented in this chapter, is used to test to see if three or more samples come from populations with the same mean. By testing to see if the variance of the observations comes more from the variation of each observation from the mean of its sample or from the variation of the means of the samples from the grand mean, ANOVA tests to see if the samples come from populations with equal means or not.

ANOVA has more elegant forms that appear in later chapters. It forms the basis for regression analysis, a statistical technique that has many business applications; it is covered in later chapters. The F-tables are also used in testing hypotheses about regression results.

This is also the beginning of multivariate statistics. Notice that in the one-way ANOVA, each observation is for two variables: the x variable and the group of which the observation is a part. In later chapters, observations will have two, three, or more variables.

The F-test for equality of variances is sometimes used before using the t-test for equality of means because the t-test, at least in the form presented in this text, requires that the samples come from populations with equal variances. You will see it used along with t-tests when the stakes are high or the researcher is a little compulsive.

Source: https://opentextbc.ca/introductorybusinessstatistics/chapter/f-test-and-one-way-anova-2/
